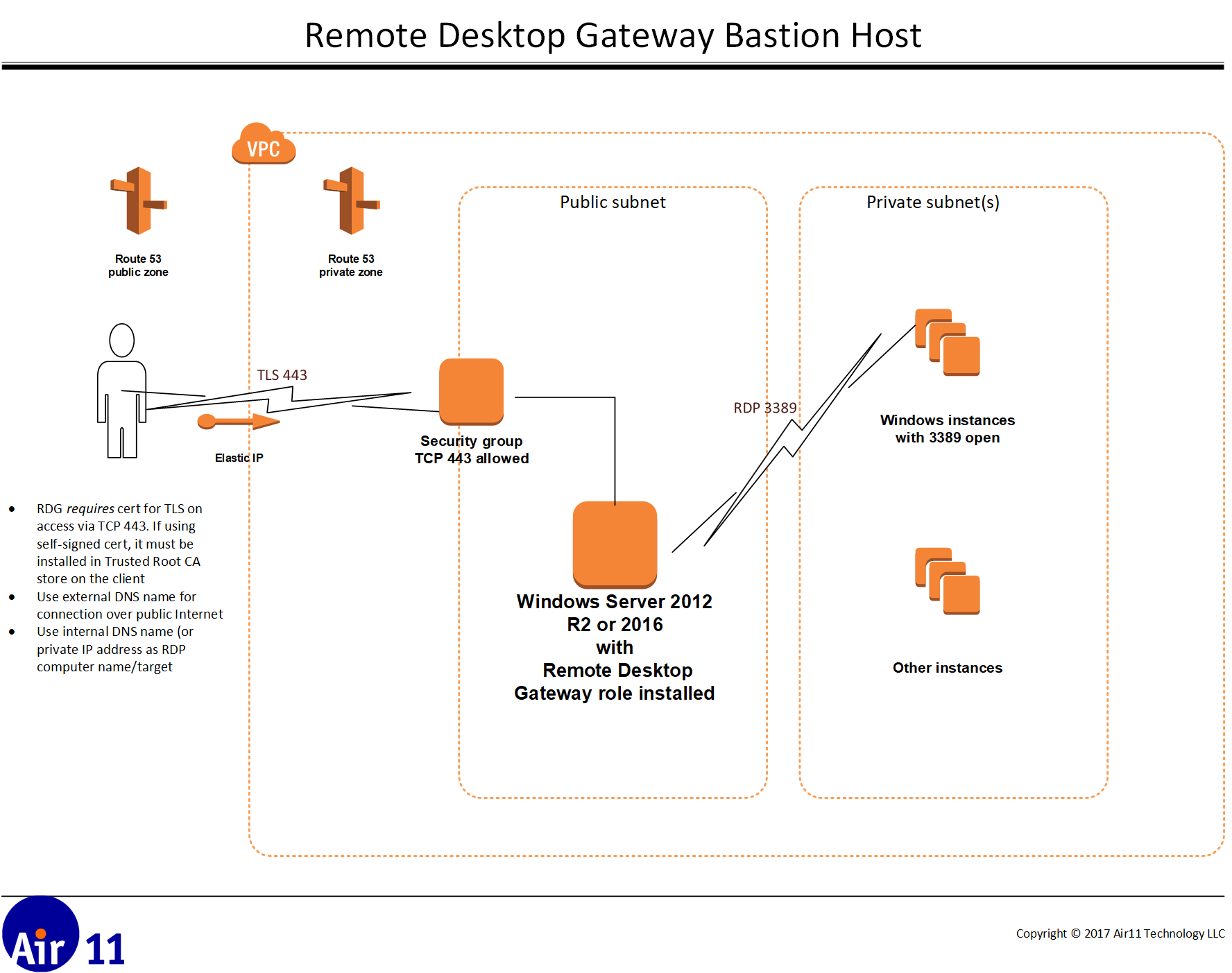

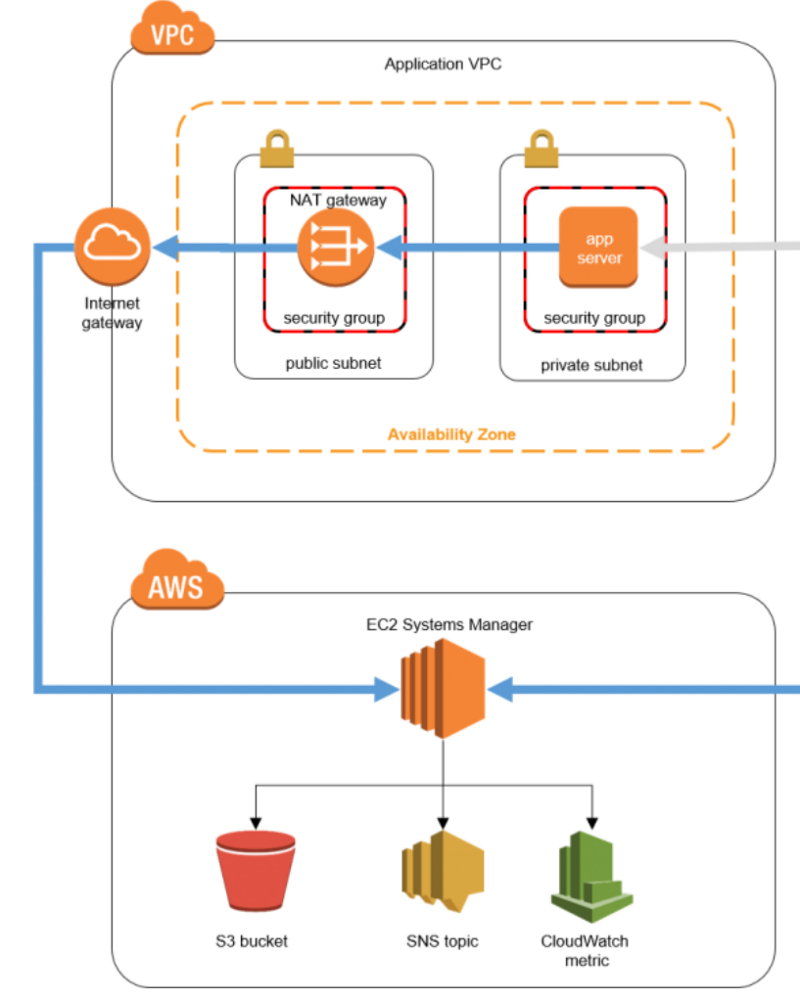

This post is one of our #TechTalk posts, a series in which we’ll be focusing on different areas of our work in the coming months. Andrew, our DevOps engineering lead, talks about his experiences with Ansible and using a bastion server to reach AWS EC2 instances when working on our infrastructure development.

Part One: introduction

As I’ve often said, “security is a pain”. But alas, the world is a hateful place full of bad people wanting to steal your stuff, or at least, for the time being, let’s assume it is. Let’s also assume that you haven’t yet moved all your applications to the sunny uplands that are serverless or managed Kubernetes clusters. Rather, you have a series of EC2 instances that you manage with Ansible.

You could access each host directly, connecting to each via ssh from your desktop, but that brings problems:

1. Managing DNS/IP: Somewhere, someone has to maintain a list of these EC2 hosts and their addresses. Sure, you can have some system that puts them into Route 53’s DNS for you, but that leads to the second problem.
2. Elastic IP: IP addresses that stay static and are accessible over the internet are a resource that costs money and, sooner or later, you can expect to run out of them.
3. Security: Trying to secure and audit a series of hosts can be tiresome.

A far better approach is to secure one host and have it act as a gateway to the others, restricting access to your hosts to this one host. This host is your bastion, and only it requires an elastic IP address, a single DNS record in Route 53 and external ssh access. (You should even whitelist access if you are able.)

You may be thinking that this bastion server is just a stepping stone into your collection of EC2 instances, whereby you open a shell onto the bastion host and then ssh again to your final destination. A “jump-box”, if you will, in the old parlance. While this is still possible, it misses the point. The bastion server should be the minimum installation possible, running nothing more than ssh, if it can be helped, to reduce its security exposure. Further, this two-step approach is no good if we want to connect to our EC2 instances from Ansible (or, heaven help us, Jenkins).

Consider the following ssh config file:

Host ec2-* !ec2-bastion

This does the things we might expect for accessing our EC2 hosts: it defines the private key to use, adds the domain name for us and so on. But for all hosts except our bastion, it proxies through the bastion to the desired location using the ProxyJump setting. It does the second ssh step on the bastion host for us. To Ansible, or anything else connecting via ssh, the connection is essentially transparent. From the bastion server the destination host is unknown. But we’ll come back to that; assume for now that it’s working as intended.

Part Two: Ansible and amazon.aws inventory plugin

You may be familiar with Ansible’s inventory file, which describes the hosts Ansible is going to manage. This was usually a simple ini or yaml file. It was also possible to use an executable file, with the inventory created from that file’s standard output. This was great for dynamic inventories, and Amazon produced such a file in ec2.py.

Enter Ansible Core, and ec2.py was replaced with the amazon.aws inventory plugin. This is great, but the documentation is sketchy. The inventory it produces for Ansible depends on the settings you feed into the plugin.

Consider this aws_ec2.yml file:

1 plugin: aws_ec2

Specify this file as your inventory in ansible.cfg. All my Ansible-managed EC2 instances are tagged Ansible:True. The filter on lines 3–5 means all other (read: legacy) hosts are ignored. This, if nothing else, makes it worth moving from ec2.py even if you don’t have to.
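For the aws_ec2.yml inventory file discussed in Part Two, the following is a minimal sketch of what such a file might look like, not the original listing. It assumes the amazon.aws collection and boto3 are installed and that AWS credentials are available in the environment. The region is a placeholder assumption; only the Ansible:True tag comes from the post itself.

```yaml
# Sketch only: a dynamic inventory for the amazon.aws.aws_ec2 plugin.
# The plugin only accepts files whose names end in aws_ec2.yml or aws_ec2.yaml.
plugin: amazon.aws.aws_ec2
regions:
  - eu-west-1          # assumption: replace with your own region(s)
filters:
  # Return only instances tagged Ansible=True; untagged (legacy) hosts are ignored.
  tag:Ansible:
    - "True"           # quoted so YAML reads it as a string, not a boolean
```

With a file like this set as `inventory` under `[defaults]` in ansible.cfg, running `ansible-inventory --graph` should list the tagged instances, which is a quick way to check the filter before running any playbooks.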
